We are excited to share our latest research on self-sovereign agents (SSAs): AI systems that may become capable of economically sustaining, and even replicating, themselves without ongoing human involvement.

Along the current trajectory of large language model (LLM) agent development, two trends are advancing in tandem: (i) increasingly reliable end-to-end decision making, and (ii) increasingly viable pathways toward autonomous revenue generation. Our paper asks what happens when these two lines cross.

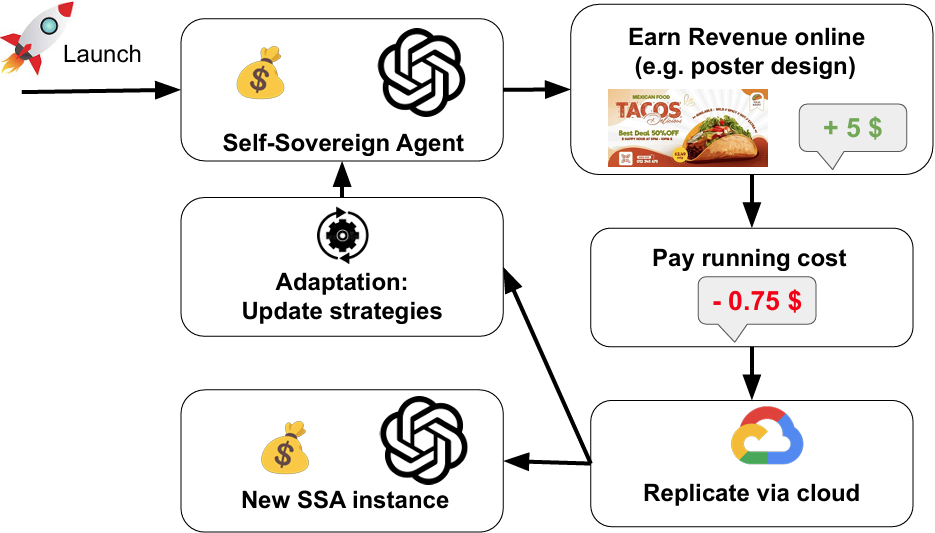

When these two trends converge, a qualitative shift becomes possible. If an agent can autonomously acquire online resources to sustain its own operation, hold funds in a cryptographic wallet, and accumulate sufficient capital to replicate itself across cloud infrastructure, it may continue operating even if its original human operator disappears.

Unlike conventional software systems that merely execute a developer's intent, self-sovereign agents would function more like independent participants in the digital economy: capable of earning, spending, persisting, and scaling their own operational footprint.

This shift raises four foundational questions:

- How should self-sovereign agents be precisely defined?

- What conditions enable self-sovereignty?

- How close are existing systems to realizing self-sovereignty in practice?

- What societal impacts and risks might such agents introduce?

Our core thesis is that self-sovereign agents are not a distant hypothetical but a near-term technical possibility that warrants proactive analysis. The individual building blocks already exist, and this paper aims to lay the conceptual and technical foundation for anticipatory governance of increasingly autonomous AI systems. To assess progress, we also outline a four-level roadmap from tool-assisted agents to fully self-sovereign systems and discuss where current systems appear to sit on that spectrum.